What's Wrong With Modern Macro? Part 5

Part 5 in a series of posts on modern macroeconomics. In this post, I will describe some of the issues with the Hodrick-Prescott (HP) filter, a tool used by many macro models to isolate fluctuations at business cycle frequencies.

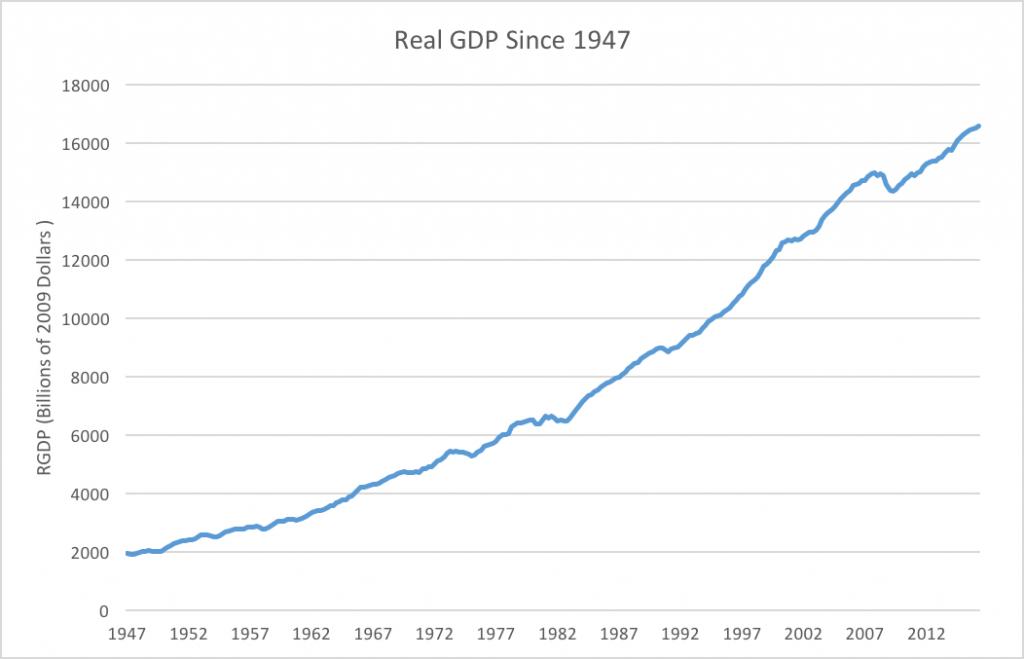

A plot of real GDP in the United States since 1947 looks like this:

Real GDP in the United States since 1947. Data from FRED

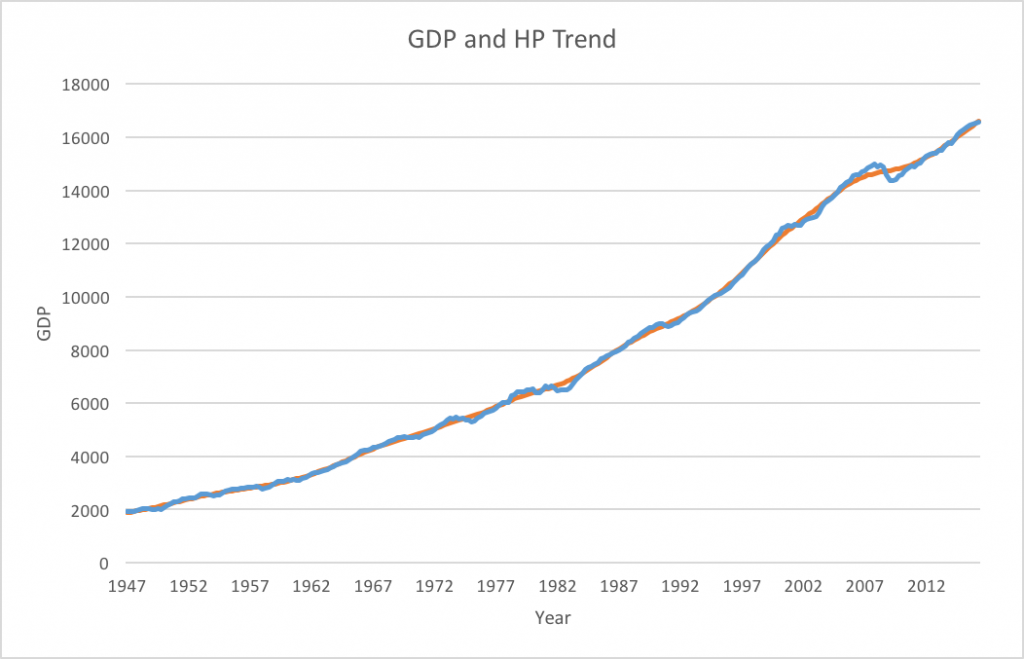

Although some recessions are easily visible (like 2009), the business cycle is not especially easy to see. The long-run upward trend dominates the more frequent business cycle fluctuations. While the trend is important, if we want to isolate those fluctuations, we need some way to remove it. The HP filter is one method of doing that. Unlike the trends in my last post, which simply fit a line to the data, the HP filter allows the trend to change over time. The exact equation is a bit complex; the Wikipedia page on the Hodrick-Prescott filter has it if you'd like to see it. Plotting the HP trend against actual GDP looks like this:

Real GDP against HP trend (smoothing parameter = 1600)

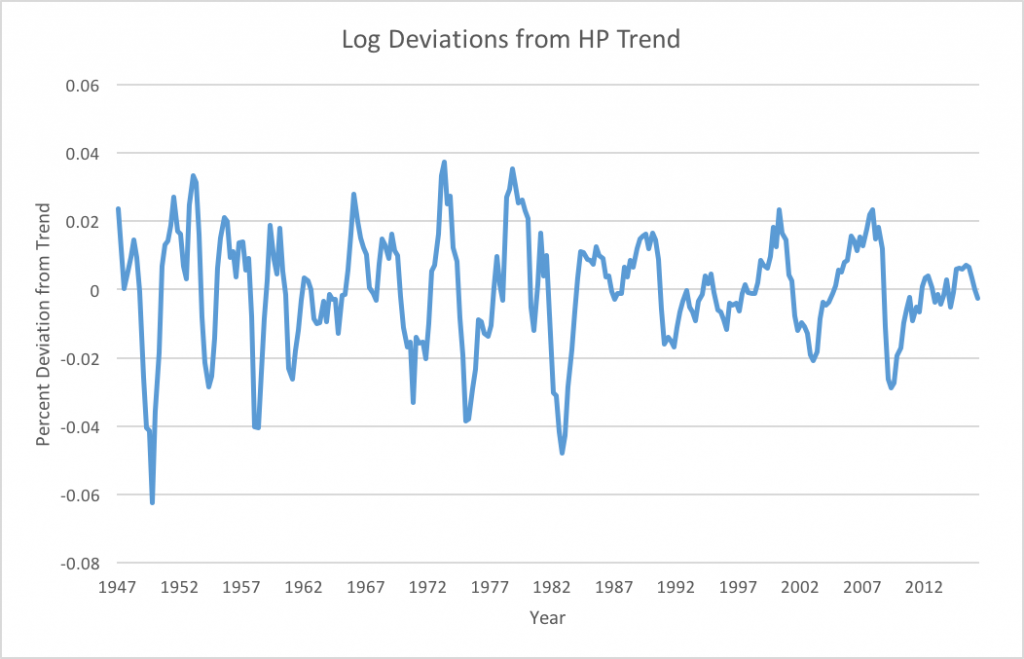
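For reference, the standard statement of the filter is short enough to write down. Given a series $y_t$ for $t = 1, \dots, T$ (notation chosen here, not taken from the post), the HP trend $\tau_t$ solves

$$
\min_{\{\tau_t\}_{t=1}^{T}} \; \sum_{t=1}^{T} \left( y_t - \tau_t \right)^2 \; + \; \lambda \sum_{t=2}^{T-1} \left[ \left( \tau_{t+1} - \tau_t \right) - \left( \tau_t - \tau_{t-1} \right) \right]^2
$$

The first sum penalizes a trend that strays from the data, the second penalizes changes in the trend's growth rate, and $\lambda$ is the smoothing parameter (1600 in the plot above, the conventional choice for quarterly data). As $\lambda \to \infty$ the trend approaches a straight line; at $\lambda = 0$ the trend is just the data itself.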

By removing this trend, we can isolate deviations from trend. Usually we also take the log of the data first, so that deviations can be read as approximate percentages. Doing that, we end up with:

Deviations from HP trend
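The detrending steps above can be sketched in a few lines of Python. This is a minimal illustration and not the code behind the plots: the function name `hp_filter`, the dense-matrix solve, and the synthetic series are all assumptions made here, and the series is made up and does not come from FRED.

```python
import numpy as np


def hp_filter(y, lamb=1600.0):
    """Split a 1-D series into (trend, cycle) with the HP filter."""
    y = np.asarray(y, dtype=float)
    n = y.size
    # Second-difference operator: row i is e[i] - 2*e[i+1] + e[i+2].
    D = np.diff(np.eye(n), n=2, axis=0)
    # First-order condition of the HP problem: (I + lamb * D'D) trend = y.
    trend = np.linalg.solve(np.eye(n) + lamb * (D.T @ D), y)
    return trend, y - trend


# Synthetic quarterly "GDP" (hypothetical numbers, for illustration only):
# steady growth plus a small six-year wiggle.
quarters = np.arange(200)
log_gdp = np.log(2000.0) + 0.008 * quarters + 0.02 * np.sin(2 * np.pi * quarters / 24)

# Filter the log of the series so deviations read as approximate percentages.
trend, cycle = hp_filter(log_gdp, lamb=1600.0)
pct_deviation = 100 * cycle

print(pct_deviation[:8].round(2))
```

Solving the first-order condition directly is fine for a few hundred observations; for longer series a sparse or library implementation would be the more natural choice.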

The last picture is what the RBC model (and most other DSGE models) tries to explain. By eliminating the trend, the HP filter focuses exclusively on short-term fluctuations. This shift in focus may be an interesting exercise, but it eliminates much of what makes business cycles important and interesting. Look at the HP trend in recent years. Although we do see a sharp drop marking the 2008-09 recession, the trend quickly adjusts so that we don't see the slow recovery at all in the HP filtered data. The Great Recession is actually one of the smaller movements by this measure. But what causes the change in the trend? The model has no answer to this question. The RBC model and other DSGE models that explain HP filtered data cannot hope to explain long periods of slow growth because they begin by filtering them away.
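A toy example makes the point about the trend absorbing a slow recovery concrete. The sketch below uses an invented economy, not actual GDP data: log output grows steadily, falls permanently by 5% at one date, and never catches up. The helper `hp_filter` and every number here are assumptions for illustration.

```python
import numpy as np


def hp_filter(y, lamb=1600.0):
    """Split a 1-D series into (trend, cycle) with the HP filter."""
    y = np.asarray(y, dtype=float)
    n = y.size
    D = np.diff(np.eye(n), n=2, axis=0)  # second-difference operator
    trend = np.linalg.solve(np.eye(n) + lamb * (D.T @ D), y)
    return trend, y - trend


# Hypothetical economy: log output grows 0.5% per quarter, then drops
# permanently by 5% at quarter 120 and resumes the same growth rate.
quarters = np.arange(200)
pre_crisis_path = 0.005 * quarters
log_output = pre_crisis_path - 0.05 * (quarters >= 120)

_, cycle = hp_filter(log_output)

# Two ways to measure the shortfall, both in percent.
gap_vs_old_path = 100 * (log_output - pre_crisis_path)  # stays at -5 forever
hp_gap = 100 * cycle                                    # deviation from HP trend

for q in (119, 120, 130, 160, 199):
    print(f"quarter {q}: vs old path {gap_vs_old_path[q]:6.2f}%, HP gap {hp_gap[q]:6.2f}%")
```

Measured against the old path, output stays 5% lower indefinitely. Measured against the HP trend, the gap fades back toward zero as the trend bends to meet the data, so the permanent loss ends up in the part of the series the model never tries to explain.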

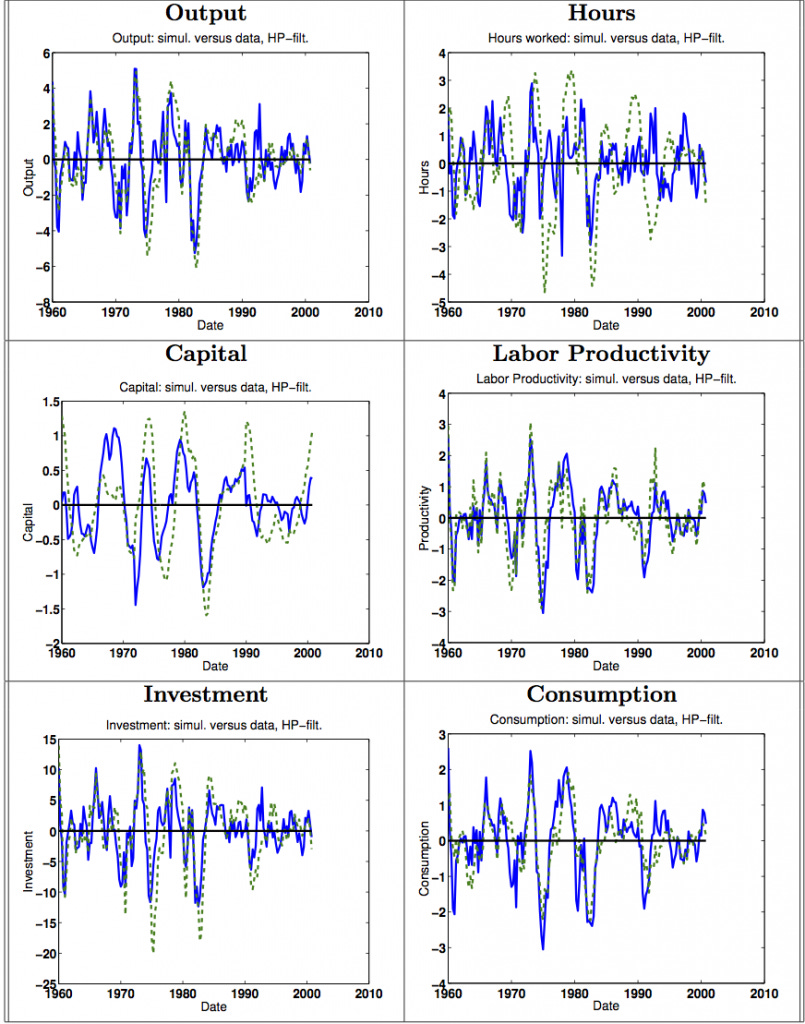

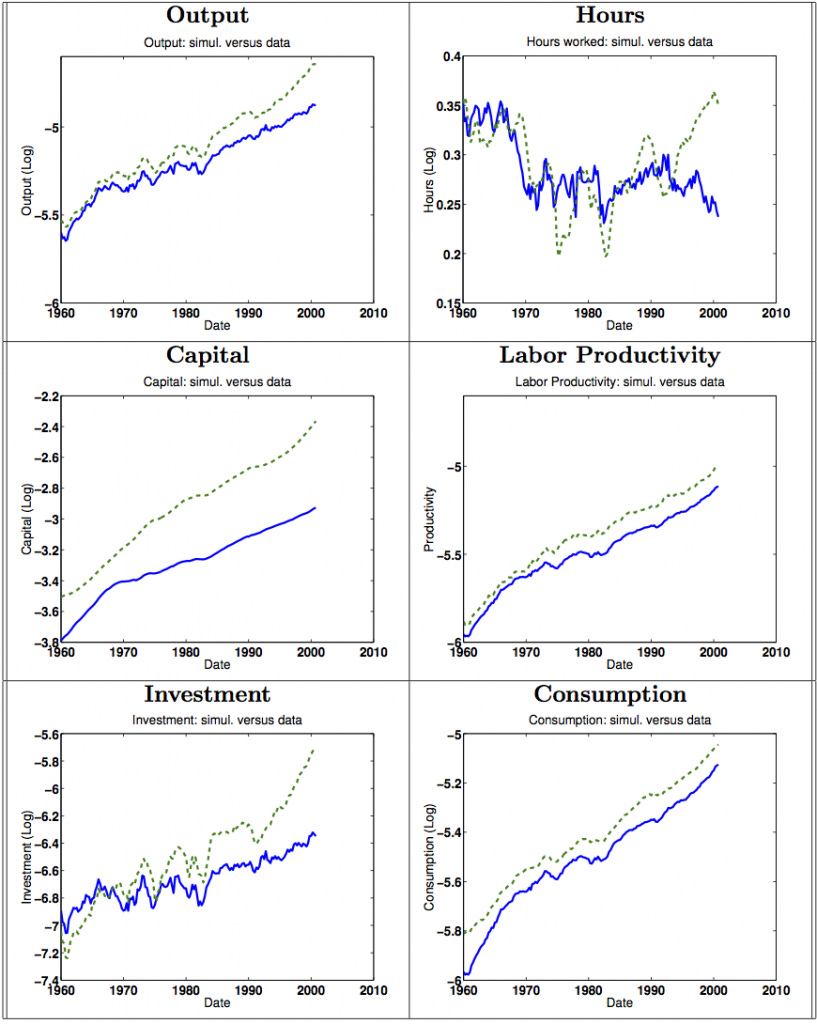

Let's go back one more time to these graphs:

Source: Harald Uhlig (2003): How well do we understand business cycles and growth? Examining the data with a real business cycle model.

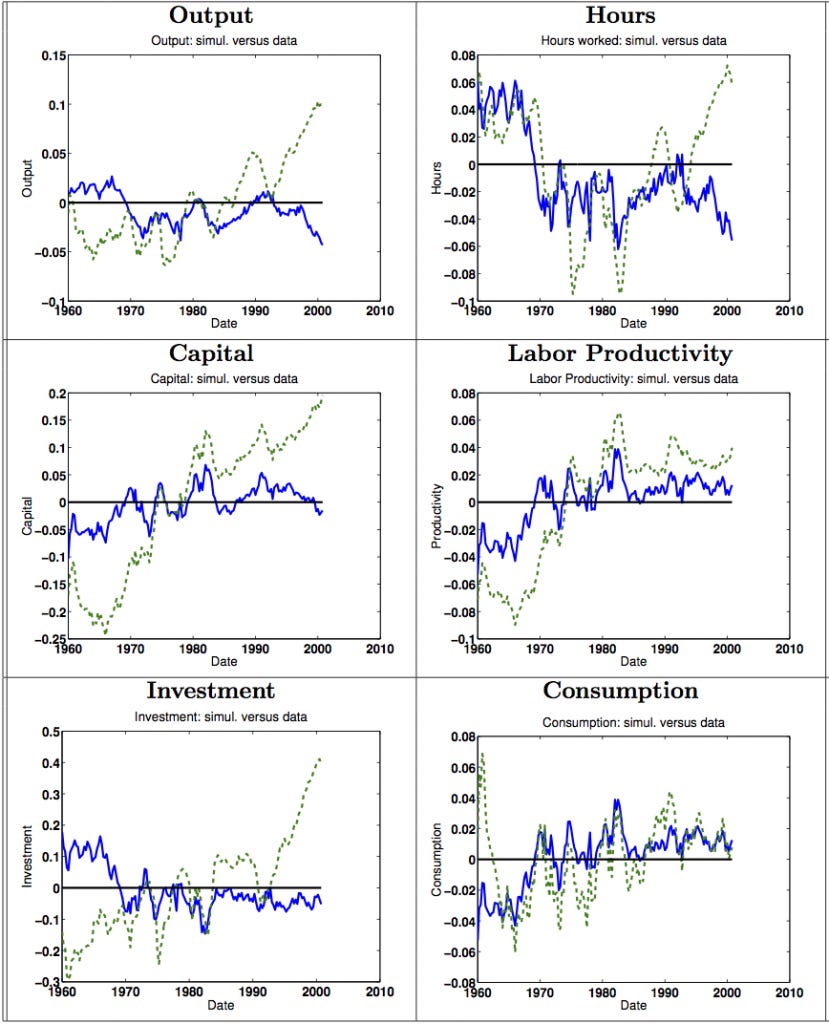

Notice that these graphs show the fit of the model to HP filtered data. Here's what they look like when the filter is removed:

Source: Harald Uhlig (2003): How well do we understand business cycles and growth? Examining the data with a real business cycle model.

Not so good. And it gets worse. Remember that, as we saw in the last post, TFP itself was highly correlated with each of these variables. If we remove the TFP trend, the picture changes to:

Source: Harald Uhlig (2003): How well do we understand business cycles and growth? Examining the data with a real business cycle model.

Yeah. So much for that great fit. Roger Farmer describes the deceit of the RBC model brilliantly in a blog post called Real Business Cycle and the High School Olympics. He argues that when early RBC modelers noticed a conflict between their model and the data, they didn't change the model. They changed the data. They couldn't clear the Olympic bar of explaining business cycles, so they lowered the bar, instead explaining only the "wiggles." But, as Farmer contends, the important question is how to explain the trends that the HP filter assumes away.

Maybe the most convincing reason that something is wrong with this method of measuring business cycles is that measurements of the cost of business cycles using this metric are tiny. Based on a calculation by Lucas, Ayse Imrohoroglu explains that under the assumptions of the model, an individual would need to be given $28.96 per year to be sufficiently compensated for the risk of business cycles. In other words, completely eliminating recessions is worth about as much as a couple of decent meals. To anyone who has lived in a real economy, this number is obviously ridiculous, but when business cycles are relegated to small deviations from a moving trend, there isn't much to be gained from eliminating the wiggles.
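To see why the number comes out so small, it helps to look at the shape of Lucas's calculation. With constant relative risk aversion and log consumption that fluctuates around its trend, the share of consumption a household would give up to remove the fluctuations is approximately one half times the risk aversion coefficient times the variance of those fluctuations. The sketch below evaluates that approximation. The function name, the parameter values, and the consumption level are illustrative assumptions chosen here, and they are not meant to reproduce the figure quoted above.

```python
def lucas_welfare_cost(risk_aversion, sd_log_consumption):
    """Approximate share of consumption worth giving up to remove cycles.

    Uses the approximation 0.5 * risk_aversion * variance, where the variance
    is that of log consumption around its trend.
    """
    return 0.5 * risk_aversion * sd_log_consumption ** 2


# Illustrative assumptions (not from the post or from Imrohoroglu):
sd_cycle = 0.02              # 2% standard deviation of detrended log consumption
annual_consumption = 40_000  # hypothetical dollars per person per year

for risk_aversion in (1.0, 2.0, 5.0):
    share = lucas_welfare_cost(risk_aversion, sd_cycle)
    print(
        f"risk aversion {risk_aversion}: {100 * share:.3f}% of consumption, "
        f"about ${share * annual_consumption:.2f} per year"
    )
```

Because the cost scales with the variance of the detrended series, anything the filter moves into the trend simply drops out of the calculation.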

There are more technical reasons to be wary of the HP filter that I don't want to get into here. A recent paper called "Why You Should Never Use the Hodrick-Prescott Filter" by James Hamilton, a prominent econometrician, goes into many of the problems with the HP filter in detail. He opens by saying "The HP filter produces series with spurious dynamic relations that have no basis in the underlying data-generating process." If you don't trust me, at least trust him.

TFP doesn't measure productivity. HP filtered data doesn't capture business cycles. So the RBC model is 0/2. It doesn't get much better from here.